Just putting that out into the universe because I need it. Thank you, AI gods!

I’ve been converted as of Midjourney v5. I always disliked the style before: every time you looked at v4 and lower, it had that “Midjourney” look, which didn’t look bad; I just didn’t like it. With v5 that’s gone, and I freakin’ love it!

A few recent pieces:

- Hippy Putin (Imgur backup)

- Ted Cruz, Communist Hunter (Imgur)

- Trump, One of the Best At Catching Footballs (Imgur)

- Anderson Cooper is Jack Ryan

Compared to MJ v5, Adobe Firefly looks like the mediocre offering that it is. After this experience, I have a hard time imagining it would be fun to go back to DALL-E or Stable Diffusion either, but I suppose the new thing eventually loses its sparkle too… More on MJ later.

You know it’s important and hard-hitting when both BroBible and Unilad are carrying it (possibly originating with the Daily Star?). Terminator director James Cameron is quoted as saying:

“AI could have taken over the world and already be manipulating it, but we just don’t know because it would have total control over all the media and everything. What better explanation is there for how absurd everything is right now? Nothing makes better sense to me.”

I’ve written about this before vis-à-vis the AI hegemony: culturally and globally, we’ve effectively been living under AI rule via social media platforms for more than a decade. The inevitable throughline seems to be that one day we dispense with the charade of the old systems and tilt headlong into overt AI rule.

In fact, this is pretty much the plot of my second (manually-written) book, Conspiratopia (a novella), in which a young unwitting conspiracy dude is drawn into a web of lies after being exposed to the AI Virus by seeing an ad on social media (see also).

In a way, I think this also connects to what Jaron Lanier was talking about w/r/t social media platforms driving users (probably unintentionally, but who knows) towards a sort of composite personality that expresses all the foibles and fragility built into the technology itself.

Also, if you follow the logic of sci-fi author Charles Stross, who claims that corporations are already “slow motion” AIs, then we’ve been under the thumb of these entities for hundreds of years…

I’ve used a loooot of AI image generators by now. I’ve gone whole hog on this just about every day since August of last year. That’s why it’s shocking to me that huge companies like Microsoft & Adobe don’t seem to have picked up on a few basic and absolutely essential features for generative AI image results pages:

- Click to enlarge image results
  - No, clicking and having it open bigger on its own URL/page doesn’t count (sorry, Bing!). Just let me click it to see it bigger, then click out of the modal to go back to the regular thumbnail. This helps me decide whether I want to download it.
- Flip through image results using left/right arrows
  - OpenAI is actually the only one I’ve used that does this, and it’s so basic I can’t believe they don’t all copy it.
- One-click download from any view
  - This one is also quite simple: let me download an image with a one-click button (not a multi-click menu option), no matter which view I’m in.
- Download them all
  - An option to download all results at once.
- Image filenames reflect source model, prompt text, and date
  - Self-explanatory.
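That last item is easy to automate. Here is a minimal Python sketch, assuming a naming pattern of model, date, prompt slug, and result index; the pattern and the `slugify`/`result_filename` helpers are hypothetical, not something any of these services actually offers.

```python
import re
from datetime import date


def slugify(text: str) -> str:
    """Lowercase text and collapse every run of non-alphanumerics into one hyphen."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def result_filename(model: str, prompt: str, created: date, index: int = 1,
                    ext: str = "png", max_prompt_len: int = 60) -> str:
    """Name one result as <model>_<date>_<prompt>_<index>.<ext> (hypothetical scheme)."""
    # Trim long prompts so names stay filesystem-friendly, without a dangling hyphen.
    prompt_slug = slugify(prompt)[:max_prompt_len].rstrip("-")
    return f"{slugify(model)}_{created.isoformat()}_{prompt_slug}_{index:02d}.{ext}"


print(result_filename("Midjourney v5", "Hippy Putin, in a field!", date(2023, 3, 20)))
# midjourney-v5_2023-03-20_hippy-putin-in-a-field_01.png
```

Non-ASCII characters are simply dropped by the slug, which keeps names portable at the cost of losing some prompt text.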

There, I said it.

Bing’s Image Creator now says “powered by DALL-E,” and news articles quoted Microsoft as saying it uses the “latest version,” which people on Reddit say is the new experimental DALL-E model.

I tried Adobe Firefly. The UI is… kinda bad, and it’s confusing how it works. The results were so-so, but at least different in style from the others. I do still appreciate that Adobe built theirs on images they have licenses to use.

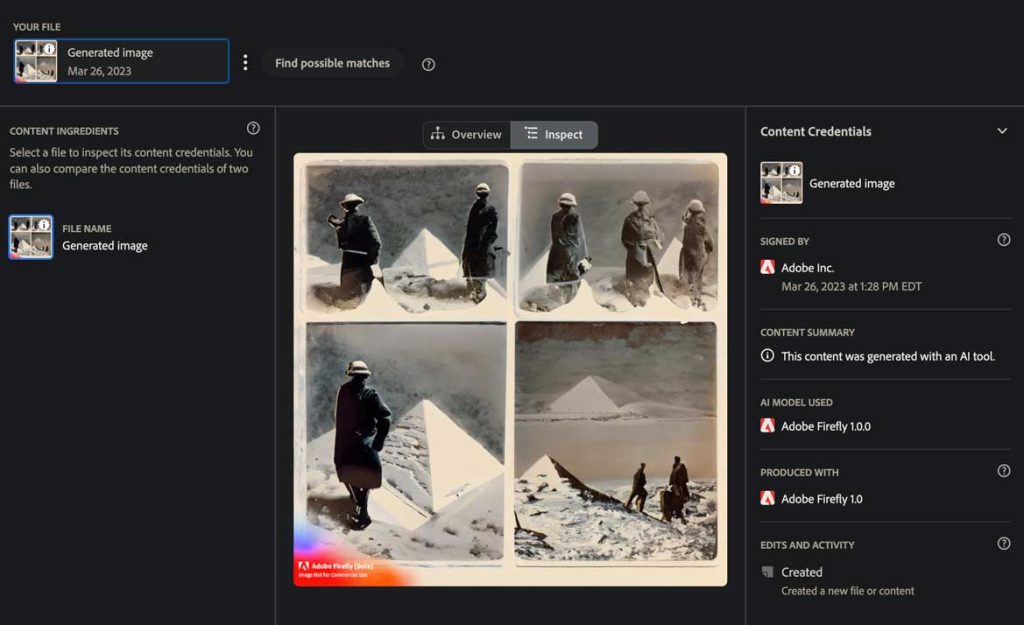

I already wrote about Adobe’s new implementation of Content Credentials, and I finally got access to Firefly, their new image generation tool. Curiously, all you have to do to remove the content credentials is open the image(s) in Photoshop and resave them (making sure they’re still JPGs).

Poof!

No more content credentials.

To test this out yourself, upload your original Firefly image here (this is Adobe’s tool). Then save your image in Photoshop & re-upload it. For your original uploads direct out of Firefly, you should see something like this:

In the sidebar, you see it says “Generated image” along with the date, the AI model used, the signer (Adobe), and a summary that says “This content was generated with an AI tool.”

If you do that with the same file after saving it in Photoshop, you will instead get this message in the right sidebar:

No Content Credentials attached

There are no Content Credentials attached to this file.

Presumably this happens because I have Content Credentials turned off in Photoshop, which results not just in a gap in the data but in the data being stripped out altogether. That seems a little weird, unless you don’t want this data attached to your files, in which case it’s good news: it’s relatively easy to get rid of. You could write a macro or make a batch action (or whatever) to resave the files in a folder, and you’re done.
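To check a whole folder locally instead of uploading files one by one, here is a minimal stdlib-only Python sketch. It assumes the C2PA manifest is embedded in JPEG APP11 segments as JUMBF boxes labeled `c2pa`, which is my understanding of how C2PA embeds it in JPEGs. It only looks for that label; unlike Adobe’s verify tool, it does not validate signatures. The function names are hypothetical.

```python
import struct
from pathlib import Path

APP11 = 0xEB  # APP11 marker: assumed home of the C2PA JUMBF boxes in a JPEG
SOS = 0xDA    # start of scan: compressed image data follows, so metadata is done
EOI = 0xD9    # end of image
STANDALONE = {0x01, 0xD8, *range(0xD0, 0xD8)}  # markers with no length field


def app11_segments(data: bytes) -> list[bytes]:
    """Return the payloads of every APP11 segment before the image data."""
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG (missing SOI marker)")
    segments = []
    pos = 2
    while pos + 1 < len(data):
        if data[pos] != 0xFF:
            raise ValueError(f"expected a marker at offset {pos}")
        marker = data[pos + 1]
        if marker == 0xFF:  # padding byte before the real marker
            pos += 1
            continue
        if marker in (SOS, EOI):
            break
        if marker in STANDALONE:
            pos += 2
            continue
        if pos + 4 > len(data):
            raise ValueError("truncated segment header")
        # The 2-byte big-endian length counts itself but not the marker.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        if marker == APP11:
            segments.append(data[pos + 4:pos + 2 + length])
        pos += 2 + length
    return segments


def has_content_credentials(data: bytes) -> bool:
    """Heuristic: does any APP11 segment carry a 'c2pa' JUMBF label?"""
    return any(b"c2pa" in seg for seg in app11_segments(data))


def scan_folder(folder: str) -> dict[str, bool]:
    """Map each .jpg in a folder to whether it still seems to carry credentials."""
    return {p.name: has_content_credentials(p.read_bytes())
            for p in sorted(Path(folder).glob("*.jpg"))}
```

Running `scan_folder` on the original Firefly downloads and then on the Photoshop-resaved copies should, if the behavior described above holds, report `True` for the first set and `False` for the second.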

Bonus: Remove watermark with content-aware fill

While you’re at it, if you don’t want that watermark to appear, the answer is quite easy. Just draw a lasso selection around it, then go to Edit > Content-Aware Fill.

Poof!

You’re done. It’s not always quite perfect (and feathering your selection edge might be in order), but it does a decent job. Now you can add that to your batch action to remove content credentials.

I know it’s early yet, but if both of these are so easy to remove, it’s worth making them optional, so users who don’t want them don’t have to jump through hoops for no real reason.

I also keep thinking about this quote from the Jaron Lanier Guardian piece:

As for Twitter, he says it has brought out the worst in us. “It has a way of taking people who start out as distinct individuals and converging them into the same personality, optimised for Twitter engagement. That personality is insecure and nervous, focused on personal slights and affronted by claims of rights by others if they’re different people. The example I use is Trump, Kanye and Elon [Musk, who now owns Twitter]. Ten years ago they had distinct personalities. But they’ve converged to have a remarkable similarity of personality, and I think that’s the personality you get if you spend too much time on Twitter. It turns you into a little kid in a schoolyard who is both desperate for attention and afraid of being the one who gets beat up. You end up being this phoney who’s self-concerned but loses empathy for others.”

(Via Ran Prieur)

There’s a lot to unpack here, and I’ve tried a little to do so in some of my AI lore books, especially The Gestalt Minds and Inside Princeps. My versions veer off into pulp sci-fi explorations of some of those themes. It makes me think of a storyline: what if the purpose of apps were to make and regulate composite/collective human personalities, rather than that being a (maybe) accidental byproduct of bad design choices?

Highly personalized AI would certainly be an ideal vector in a story like that: the gaslighting AI app that functions completely differently for different people, but is slowly merging them into hyper-personalities…

A pretty good, though short and sort of unfocused, article about Jaron Lanier’s hot take that AI is more likely to cause mass insanity than to kill us outright. I happen to agree with that, and just wanted to capture this quote for future reference.

Lanier says the more sophisticated technology becomes, the more damage we can do with it, and the more we have a “responsibility to sanity”. In other words, a responsibility to act morally and humanely.

Adobe recently released V1 of its own suite of generative AI tools called “Firefly” (waitlist only for now – I don’t have access yet, but managed to piece together this information). Designed to integrate with Adobe’s existing apps, Firefly enables users to create generative AI images. Adobe has also incorporated “Content Credentials” into its AI-generated image outputs, asserting that the content was produced by AI (see the screenshot above).

Content Credentials are Adobe’s in-house implementation of the Coalition for Content Provenance and Authenticity (C2PA) standard. The C2PA is an open industry initiative focused on developing standards for tracing the provenance and authenticity of web media. By incorporating Content Credentials into Firefly, Adobe aims to promote transparency and trust in the AI-generated media space.

As part of the Content Authenticity Initiative (CAI) metadata, Adobe has introduced a custom assertion: com.adobe.generative-ai. This assertion helps establish that the content was generated using Adobe’s Firefly AI tools.

A new addition to the C2PA standard, the digitalSourceType value trainedAlgorithmicMedia, will further complement Adobe’s efforts in the AI-generated media space. This classification explicitly marks media as generated by trained algorithms, adding another layer of transparency.
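To make these two markers concrete, here is a minimal Python sketch that checks a manifest (already decoded into a dict) for either one. The dict layout (a list of assertions with `label` and `data` keys), the shape of the `c2pa.actions` entry, and the full IPTC URI are assumptions about how these records look, not Adobe’s documented format. The `com.adobe.generative-ai` label comes from the text above; its contents are left empty because they aren’t described here.

```python
# Assumed full IPTC URI for trainedAlgorithmicMedia; the manifest layout below is also assumed.
TRAINED_ALGORITHMIC_MEDIA = (
    "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"
)
ADOBE_GENAI_LABEL = "com.adobe.generative-ai"


def ai_generation_signals(manifest: dict) -> list[str]:
    """Collect the assertions in a decoded manifest that say 'made by AI'."""
    signals = []
    for assertion in manifest.get("assertions", []):
        label = assertion.get("label", "")
        # startswith() tolerates versioned labels such as "c2pa.actions.v2".
        if label.startswith(ADOBE_GENAI_LABEL):
            signals.append("Adobe generative-AI assertion")
        elif label.startswith("c2pa.actions"):
            for action in assertion.get("data", {}).get("actions", []):
                source = action.get("digitalSourceType", "")
                if source.endswith("trainedAlgorithmicMedia"):
                    signals.append(f"{action.get('action', '?')}: trainedAlgorithmicMedia")
    return signals


# Hypothetical manifest for illustration only.
example_manifest = {
    "assertions": [
        {"label": "c2pa.actions",
         "data": {"actions": [{"action": "c2pa.created",
                               "digitalSourceType": TRAINED_ALGORITHMIC_MEDIA}]}},
        {"label": ADOBE_GENAI_LABEL, "data": {}},
    ]
}
print(ai_generation_signals(example_manifest))
# ['c2pa.created: trainedAlgorithmicMedia', 'Adobe generative-AI assertion']
```

Matching on either marker means a file is flagged even if a tool writes only the standard C2PA field and not Adobe’s custom assertion, or vice versa.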

Although social media platforms have yet to widely adopt the Content Credentials system, end users can check a file’s content credentials on Adobe’s website at https://verify.contentauthenticity.org/ to see how the records work. This lets users trace the provenance of AI-generated media and promotes transparency. Content Credentials are also currently available in Photoshop.

Adobe has taken measures to ensure that the training data for its AI-generated images is curated from Adobe Stock and other reliable sources. According to the Firefly site, the curation process aims to mitigate harmful and biased content while respecting artists’ ownership and intellectual property rights (read more on CNET).

I wrote recently about coding an app using ChatGPT with the GPT-4 model. It’s a utility I’m calling EncycGen, which generates encyclopedia-style entries via the OpenAI API.
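For anyone curious what “via the OpenAI API” involves, here is a minimal Python sketch of a single request, assuming the standard chat-completions endpoint and the `gpt-4` model name. It is not EncycGen’s actual code: the function names and prompt wording are hypothetical, and only the standard library is used.

```python
import json
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_entry_request(topic: str, api_key: str,
                        model: str = "gpt-4") -> urllib.request.Request:
    """Build (but don't send) a chat-completions request for one encyclopedia entry."""
    payload = {
        "model": model,
        "messages": [
            # Hypothetical prompt wording, for illustration only.
            {"role": "system",
             "content": "You write concise, neutral encyclopedia-style entries."},
            {"role": "user",
             "content": f"Write an encyclopedia entry about: {topic}"},
        ],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json",
                 "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


def fetch_entry(topic: str, api_key: str) -> str:
    """Send the request and return the generated text (needs network and a real key)."""
    with urllib.request.urlopen(build_entry_request(topic, api_key)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Keeping request-building separate from sending makes it easy to inspect or log exactly what goes to the API before spending tokens; `fetch_entry("Shelvin Parz", key)` would then return one entry’s text.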

Here are two recent books I wrote using this app. The software is buggy, but it works in a rough-and-tumble, “good enough” kind of way for my purposes.

First is Tales from the Mechanical Forest, part of the “Tales” series.

It elaborates on a story from one of the first four issues (I forget which, offhand) of the print paper The Algorithm, which ultimately was the genesis for these AI lore books (or one of the geneses, anyway). In this world, huge mechanical trees suck carbon out of the air more effectively than “real” trees. This part is based on actual projects people are undertaking today IRL. The whole thing sounds so dystopian, it blows my mind!

And here’s the next book, made with EncycGen plus a couple of other tools and steps, including running the outputs back through GPT-4 for clean-up and normalization. This one is called Tales of Shelvin Parz.

Today Shelvin Parz is but a little-known ancient Quatrian magician, but in his time he was one of the best-known figures in all that land. You can read a bit more about Shelvin Parz here.

The Shelvin Parz book marks a milestone in the AI lore book series: it is #75!